In a move that makes one think of a Steven Spielberg film, UK police have been using artificial intelligence to predict crime. According to Mirror Online, UK police have used forms of “predictive policing” since at least 2004, but the development of more advanced machine learning has led to an increase in the use of AI by police.

UK police are reportedly using AI for functions including facial recognition, social media surveillance, wide predictive crime mapping and, on an individual level, risk assessment. A new report by the Royal United Services Institute (RUSI) warns that these AI systems have human biases built into their programming, and that machine learning algorithms are resulting in discrimination against people based on race, sexuality and age.

Machine learning trains an AI against a data set with known outcomes. When MIT built an AI to predict a patient’s risk of cardiovascular death, the AI was trained with historical data of patient outcomes. For crime prediction, the AI is trained with past crime data, which critics argue is subject to past human biases.

A police officer interviewed in the RUSI report said, “Young black men are more likely to be stop and searched than young white men, and that’s purely down to human bias.” When you feed that data to an AI, it risks creating a self-fulfilling prophecy. If police stop people who fit a profile more often, people in that profile are arrested and convicted more frequently, which appears to justify subjecting that demographic to further scrutiny from the AI.
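The loop described above can be sketched as a toy simulation. This is only an illustration under loud assumptions: the function name `simulate_feedback`, the two-group setup, and every number are hypothetical and are not taken from the RUSI report or from any real policing system. Both groups are given the same true offence rate, so any difference in the recorded data comes purely from where the stops were directed.

```python
def simulate_feedback(initial_share_a, offence_rate, total_stops, rounds):
    """Return group A's share of stops after each round.

    Hypothetical toy model: two groups, A and B, offend at the SAME
    true rate. The only bias is the initial share of stops aimed at A.
    """
    share_a = initial_share_a
    history = []
    for _ in range(rounds):
        stops_a = total_stops * share_a
        stops_b = total_stops * (1 - share_a)

        # Recorded arrests mirror where police looked, not who offends more.
        arrests_a = stops_a * offence_rate
        arrests_b = stops_b * offence_rate

        # A naive "model" trained on arrest records directs the next
        # round of stops in proportion to past recorded arrests.
        share_a = arrests_a / (arrests_a + arrests_b)
        history.append(share_a)
    return history


if __name__ == "__main__":
    # Assumed numbers: 70% of stops start out aimed at group A.
    for round_no, share in enumerate(simulate_feedback(0.7, 0.1, 1000, 5), 1):
        print(f"round {round_no}: share of stops on group A = {share:.2f}")
```

In this sketch the biased starting share never corrects itself, even though the two groups behave identically. The arrest records keep "confirming" the original allocation, which is the self-fulfilling part. A real system could behave better or worse than this, depending on how it weights the data.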

Because AIs are basically built in the image of the data sets their creators feed them, we’ve seen bias emerge in real time. In 2016, a Microsoft artificial intelligence chatbot became racist after only a few hours. The AI was trained on users’ comments and interactions (on the cesspool that is Twitter), and this video compiled some of the craziest tweets from the bot.

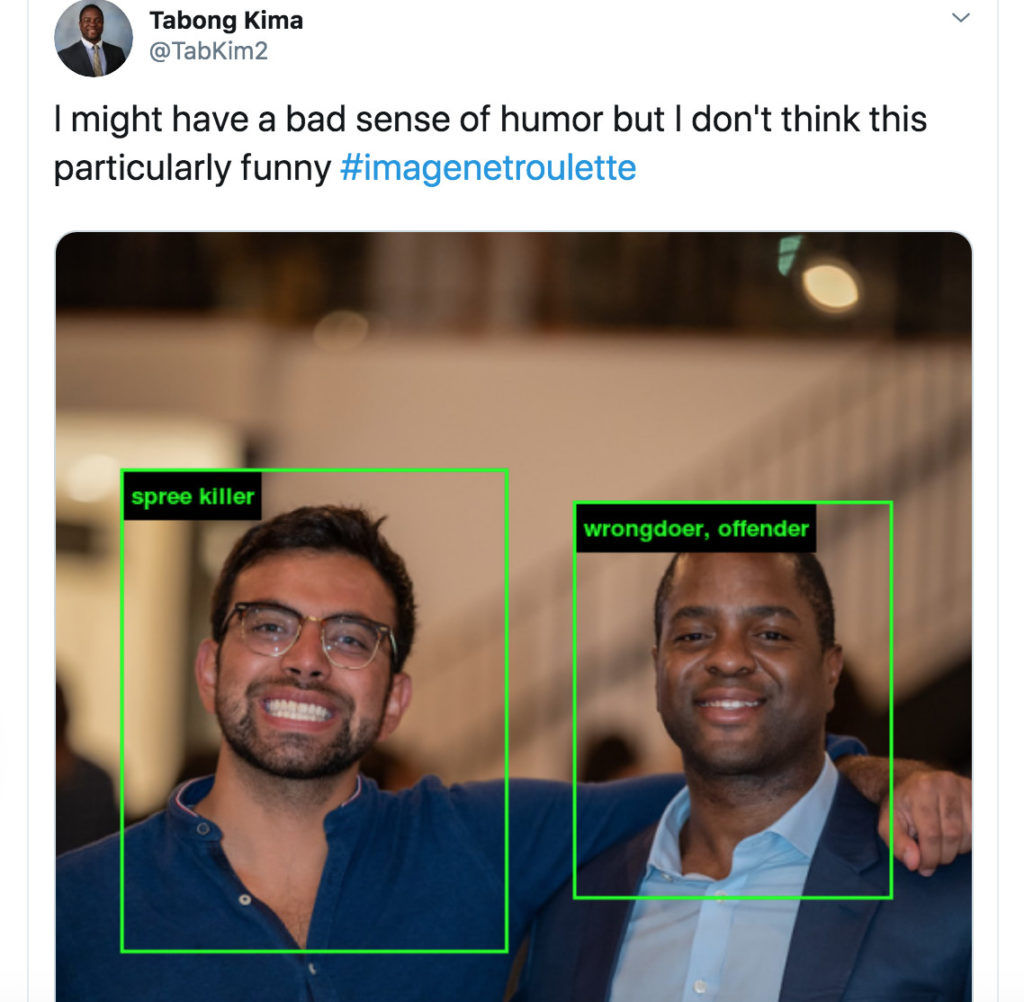

Further, two artists set out to prove a point about AI. Trevor Paglen and Kate Crawford created ImageNet Roulette, a website that matches user-uploaded selfies with closely resembling photos from a large library. The AI then shows the most popular tag assigned to the matching pictures, taken from a human-made data set built on WordNet.

The library of tagged pictures was labeled by people paid through Amazon’s Mechanical Turk. Users are given a task, for example “label this photo,” and paid a few pennies for each completed item. Some of the labels have included racial slurs, along with terms like “rape suspect” and “spree killer”.
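The matching-and-tagging behaviour described above can be illustrated with a small nearest-neighbour sketch. This is not ImageNet Roulette's actual code. The function `most_popular_tag`, the two-number "features" standing in for photos, and the tags in `LIBRARY` are all made up for illustration. The point is only that the output can never be better than the labels people attached to the library.

```python
import math
from collections import Counter

# Hypothetical library: (feature vector, human-assigned tag).
# Real systems use learned image features; two numbers stand in here.
LIBRARY = [
    ((0.10, 0.20), "teacher"),
    ((0.15, 0.25), "suspect"),
    ((0.20, 0.10), "suspect"),
    ((0.90, 0.90), "pilot"),
]


def most_popular_tag(query, library, k=3):
    """Return the most common tag among the k library items closest to query."""
    # Rank library photos by Euclidean distance to the uploaded photo.
    nearest = sorted(library, key=lambda item: math.dist(query, item[0]))[:k]
    # Count the human-assigned tags on those matches and pick the top one.
    tags = Counter(tag for _, tag in nearest)
    return tags.most_common(1)[0][0]


if __name__ == "__main__":
    selfie = (0.12, 0.20)  # assumed features for an uploaded selfie
    print(most_popular_tag(selfie, LIBRARY))
```

Here the closest single match is tagged “teacher”, but two of the three nearest photos carry the tag “suspect”, so that is what the uploader is shown. Nothing in the code judges whether the tag is fair. It only repeats what the labelers wrote.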

The art project and Microsoft’s earlier chatbot serve as a pretty strong warning about the dangers of AI with bad training data. Those who support police use of AI for crime prevention often claim that AI can eliminate human subjectivity. I personally don’t think we’ve seen evidence of that being the case, but I’ve seen a lot of bad robots.

Header Image: Futurama

Mason Pelt is a guest author for Internet News Flash. He’s been a staff writer for SiliconANGLE and has written for TechCrunch, VentureBeat, Social Media Today and more.

He’s a Managing Director of Push ROI, and he acted as an informal adviser when building the first Internet News Flash website. Ask him why you shouldn’t work with Spring Free EV.
